Tue, 10 Dec 2024

Reframing Multi-factor Authentication

In December, I was the victim of a domain hijacking attack in which Google Domains transferred control of all of my domains from my Google account to an attacker's Google account. These domains are used for my personal and business email, and all of my online accounts were associated with my email addresses at these domains. By gaining control of my domains, the attacker was able to direct email sent to my domains to a mail server under his control so that he could intercept incoming email for my domains.

With control of incoming email, the attacker was able to gain control of some of the accounts registered using these email addresses. He was able to compromise those accounts that did not have multi-factor authentication enabled, that is, accounts that did not require, after password authentication, a one-time password sent by SMS or a time-based one-time password (TOTP) generated using a shared secret.

As a result of this attack, I have a new perspective on the value of multi-factor authentication. Re-framing how multi-factor authentication is described, in terms of possessions that one is unlikely to concurrently lose control over, makes its value clearer and provides a better way of thinking about the risks of an account being compromised.

A list of recommendations is provided at the end.

Traditional Framing of MFA

The traditional description of multi-factor authentication is a mechanism that requires two things in order to authenticate oneself:

- something the user knows

- something the user has

The "something the user knows" is typically a password. The "something the user has" is often a mobile phone, which either can receive SMS messages or has an application installed such as Google Authenticator, which stores shared keys used to generate one-time codes.
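To make the "shared key" concrete, here is a minimal sketch of how a TOTP generator derives a code from a shared secret and the current time, following RFC 6238 with HMAC-SHA1. It is written in JavaScript for Node.js. The function name `totp` and its defaults (six digits, 30-second steps) are choices made for this illustration, and the secret is the published RFC test key, not something a real service would issue.

```javascript
const crypto = require('crypto');

// RFC 6238 TOTP with HMAC-SHA1 (illustrative sketch, not a vetted library).
function totp(secret, unixSeconds, digits = 6, stepSeconds = 30) {
  // The moving factor is the number of time steps since the Unix epoch.
  const counter = BigInt(Math.floor(unixSeconds / stepSeconds));
  const counterBytes = Buffer.alloc(8);
  counterBytes.writeBigUInt64BE(counter);

  const hmac = crypto.createHmac('sha1', secret).update(counterBytes).digest();

  // Dynamic truncation (RFC 4226): the low 4 bits of the last byte pick an offset,
  // and the top bit of the 4 bytes read there is masked off.
  const offset = hmac[hmac.length - 1] & 0x0f;
  const binary = hmac.readUInt32BE(offset) & 0x7fffffff;

  return String(binary % 10 ** digits).padStart(digits, '0');
}

// Shared secret from the RFC 6238 test vectors (ASCII bytes).
const sharedSecret = Buffer.from('12345678901234567890', 'ascii');
console.log(totp(sharedSecret, Math.floor(Date.now() / 1000)));
```

Anyone holding a copy of the secret can compute the same codes, so it is the secret, rather than the phone itself, that acts as the possession.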

Describing MFA in these terms is misleading for a few reasons:

- the password is no longer something the user knows

- "something the user has" is too abstract

- it suggests there are only two distinct things which the user must have

It is no longer common nor recommended for users to know the password used to access each online service. Most people use tens to hundreds of services. It's not feasible to remember separate passwords for each service. Since users do not have control over how services store passwords, it's also not recommended to use the same password for multiple services. There have been numerous cases of passwords being leaked due to security compromises.

Because it's impractical to know your passwords, a password manager must be used. To make use of a password manager, you will typically want it to be available on the computers you are using regularly. These are probably your laptop/desktop and your phone. Storing your web application passwords in your Google account or your iCloud keychain, for example, keeps your passwords where you need to access them.

Similarly, when you need to generate TOTP codes, it makes sense to do so on your phone or on your computer using an application like 1Password.

Multi-factor authentication using a password manager and a TOTP generator is therefore better described as "something the user has" (a password manager) and "something else the user has" (a TOTP generator). If both of these are available on the same device, an attacker gaining control of one will likely gain control of the other.

So prior to the attack I underestimated the value of multi-factor authentication because I thought of it primarily in terms of what would happen if an attacker gained control of my computer. If gaining control gave them access to all of the factors needed to access an account, multi-factor authentication wasn't better than one-factor authentication.

Better Framing As Possessions

A better way to think about multi-factor authentication is:

- requiring multiple possessions, the exclusive control over which is unlikely to be lost concurrently

- understanding which possessions are fungible as authentication factors

In the domain hijacking attack, my computer wasn't compromised. None of my account passwords were compromised. None of the keys used to generate TOTP codes were compromised. What was compromised was the possession that can be described as control over incoming email (COIE), and COIE is often equivalent to a password.

Some applications make the equivalence of a password and COIE explicit. Slack, for example, will email you a one-time code to log in by default. (Authenticating using a password is also possible.)

Most other applications will allow you to reset your password if you have COIE, implicitly making the password and COIE equivalent with respect to authentication.

Equivalent Possessions

When authenticating using a service that makes a password and COIE equivalent, one of the required factors is therefore one of two possessions. If your account also has MFA configured to require a TOTP code, you need these two possessions to authenticate:

- password or COIE

- TOTP key

Some services may allow email to be used to receive one-time codes after authenticating using a password. If the service also allows resetting the password through email verification, these two factors are effectively reduced to one.

Similarly, if a service allows you to reset your password by verifying control over incoming SMS and also allows you to receive one-time codes via SMS, two-factor authentication would effectively be reduced to a single factor.
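The reduction described above can be sketched in a few lines of JavaScript. Each factor is modeled as a list of possessions the service treats as interchangeable, and the function finds the smallest set of possessions that satisfies every factor. The function name and the possession labels are made up for this illustration.

```javascript
// Each factor is a list of possessions the service accepts interchangeably.
// Returns the smallest set of possessions that satisfies every factor.
function minimalPossessions(factors) {
  let best = null;
  const choose = (index, chosen) => {
    if (index === factors.length) {
      if (best === null || chosen.size < best.size) best = new Set(chosen);
      return;
    }
    for (const possession of factors[index]) {
      const next = new Set(chosen);
      next.add(possession); // a possession already chosen is not counted twice
      choose(index + 1, next);
    }
  };
  choose(0, new Set());
  return [...best];
}

// Password (resettable by email) plus a TOTP code: two possessions are needed.
const passwordPlusTotp = minimalPossessions([['password', 'COIE'], ['TOTP key']]);

// Password (resettable by email) plus a one-time code sent by email:
// control over incoming email alone is enough.
const passwordPlusEmailCode = minimalPossessions([['password', 'COIE'], ['COIE']]);

console.log(passwordPlusTotp.length, passwordPlusEmailCode);
```

Running the same check against the SMS case (password reset by SMS plus one-time codes by SMS) gives a single possession as well.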

Now consider a scenario in which your passwords are stored in Google Chrome on your laptop computer and one-time passwords are sent via SMS to your phone. This ostensibly requires two distinct possessions to authenticate, your laptop and your phone. But you can inadvertently reduce this to a single possession if your SMS messages are available on your computer through iMessage or Google's Messages web client (messages.google.com), for example. (Google has recently started trying to mitigate this risk by displaying messages such as "Use the Google Messages app on your phone to chat with Microsoft" when viewing messages from Microsoft or Google on the Messages web client. The browser notifications still show the full messages so this is apparently still a work-in-progress.)

Additional Considerations

Account Access Chaining

Often, one service will grant access to possessions that can be used as one or more factors to authenticate to another service. For example, if you use Gmail for email and your Google account is compromised, that grants access to the COIE possession used for authentication for many other services.

Delegated Authentication

Some services allow using another service's account to authenticate. For example, a service may allow you to sign in with your Google or Facebook account. There is a trade-off here. If you are using your Facebook account to authenticate to multiple other services, and your Facebook account is compromised, the attacker can use it to gain access to all of those other services.

On the other hand, providing robust multi-factor authentication for a service is non-trivial. Facebook certainly devotes more resources to account security than many other services so it may be easier to maintain control of one Facebook account than a multitude of services with their own authentication mechanisms.

Segregation of Possessions

You may also want to segregate your possessions such that you have multiple instances of the same type of possession which are used to access different accounts. For example, you can use one email address for your bank accounts, and a different email address that's used for correspondence and to register for social media accounts or the marketing emails from your e-commerce accounts.

You could use a separate phone to generate your TOTP codes. It can be an old phone that doesn't have service that is kept at your desk. This makes access to your desk, in effect, a required possession for authentication (assuming the recovery codes that are treated as equivalent possessions are printed out and kept at the desk as well). There is a trade-off in that you will only be able to log into these accounts while at your desk, but it may be worthwhile or even desirable for certain types of accounts.

Notification

You want to be notified when one of your factors is used unexpectedly. Even if the second factor fails, you want to be notified if someone unexpectedly enters your password from a new location, for example.

This is analogous to having a deadbolt lock on your front door, the key to which is factor 1, along with a safe in your home to protect valuables, the code to which is factor 2 (unless you have lots of safes, you probably can keep this password in your head). It's useful to have an alarm on the front door that goes off even if a thief can't successfully break into your safe on their first try.

Recommendations

When evaluating the security of existing online accounts and when setting up accounts with new services, I offer the following suggestions.

- Enable multi-factor authentication, even on accounts that you think no attacker would want to target

- Are your password manager and phone or authenticator applications likely to be compromised concurrently?

- Test the login process if your password was compromised through shoulder surfing, for example

- Test the "forgot your password" flow

- Pretend someone got control of your phone number through a SIM-swapping attack

- Pretend someone got control of your email inbox

- Use a password manager, like the one built into Chrome, with long, automatically generated passwords

- It's not necessary to change passwords regularly; a brute-force attack is unfeasible in most situations

- If pairing text messages between your phone and computer, configure the pairing to not persist indefinitely

- Consider using a different email address for important accounts like banking than you use for correspondence and marketing emails

- Consider using a separate device to generate TOTP codes for accounts you only need to access at home

- Consider deleting email and text messages from account service providers automatically or periodically. If your mailbox is compromised, these give the attacker a list of new targets.

- Consider using delegated authentication if available, using your Google or Facebook account

In addition to securing access to your accounts, you may want to take additional precautions in securing your financial accounts. In the US, you can place a fraud alert on your credit file at each of the three main credit reporting agencies. You can do this prior to being a victim of fraud. You have to renew it annually, but it notifies potential creditors to take extra precautions in verifying your identity. You can also put a freeze on your credit reports, which should prevent any new creditors from issuing new credit using your name and social security number until you lift the freeze.

To reduce the likelihood of credit card fraud, you might consider not storing your credit card details with e-commerce sites. Large sites like Amazon will require that credit cards be re-entered when shipping orders to a new address, but smaller retailers may not. As with delegated authentication, consider delegating paying by credit card to Google Pay, Apple Pay, or Amazon Pay when available.

Wed, 12 Jan 2022

A Proposal for NFTs with Actual Property Rights

I'd like to propose a project that makes use of the technology behind NFTs while solving real problems: digital concert tickets.

As of early 2022, none of the popular NFT projects provide any actual property rights. The only right associated with each token is the right to transfer the token to someone else. This includes projects like the Bored Ape Yacht Club, where the cost of membership in the yacht club is comparable to lifetime membership at an actual yacht club; you get the exclusivity without access to an actual yacht club.

There are applications where being able to verify and trade property rights in unique assets could potentially be done at lower cost through the technology introduced with blockchains. I've previously argued that efficient replacements for rights licensing organizations like ASCAP and BMI, real estate title search/insurance companies, and ownership or shareholder tracking like MERS or ProxyVote are areas that could be fruitful for exploration.

Any of these would be a large undertaking so I'll propose a more tractable problem in concert ticket NFTs.

Concert tickets have characteristics and associated problems that make them well-suited for being represented as NFTs. They are

- Non-fungible: each ticket grants the right to use a specific seat in a specific venue for a specific duration

- High Value: each one trades at tens or hundreds (sometimes thousands) of dollars

- Existing solutions prone to counterfeiting

- Secondary markets are inefficient

Here's how concert tickets as NFTs would work.

- The venue (or venue's agent) selects a network on which the tickets will be issued

- The venue owner issues one Entrance Ticket as an NFT for each seat at the venue for an upcoming concert

- The venue owner either sets prices for each Entrance Ticket or auctions the tickets

- The Entrance Ticket is transferred to the buyer, completing the initial sale

- The buyer can resell the Entrance Ticket until the end of the concert

- On their way into the venue before the concert, the owner of the Entrance Ticket exchanges with the ticket collector their Entrance Ticket for a Seat Ticket

This solves a few problems with current ticketing solutions.

Buyers can verify that they are buying an authentic ticket. They would verify that the issuer is the venue owner off-chain. The venue owner should publish the public key of the issuer. They can simply publish it on their web site, or they could make use of an identity-linking mechanism like Keybase to link their public key to multiple identities such as a Twitter profile, a domain in DNS that they own, and a website that they manage to provide buyers greater confidence that they are buying from the legitimate seller.

When one concertgoer wants to buy a ticket from a previous buyer in a secondary transaction, buyers can verify on-chain that the ticket they are buying was originally issued by the venue by verifying the identity of the original issuer.

This solves the problem with buyers potentially buying counterfeit tickets from third-party sellers using Craigslist, for example. It also can reduce the transaction costs associated with buying tickets on the secondary market through trusted intermediaries like Ticketmaster.

Venues, i.e. ticket issuers, also benefit by eliminating the possibility of counterfeiting tickets. Apart from avoiding the costs of mediating disputes when two people show up with the same ticket in the form of a bar code, or two people claim the same seat number based on a printed ticket or screenshot, there will be greater demand for tickets from buyers who know that they can resell their tickets. Therefore some of the benefit should accrue to ticket issuers in the form of higher initial ticket sale prices.

Up above, I described how Entrance Tickets are exchanged for Seat Tickets at the door. This solves the double-spend problem. A ticket holder could share their private key with another person, allowing two people to prove ownership of the same ticket if ticket ownership is simply checked at the door.

This may be a minor problem, but since the tickets need to be checked at the door anyway, we can have the ticket collector and ticket holder perform a trade on-chain at the door.

The ticket holder transfers their Entrance Ticket for a Seat Ticket. The Seat Ticket is issued for the same seat at the same venue for the same duration, but is not valid for entering the venue. Now, a second holder of the private key can no longer enter the venue, but the ticket holder can still show the usher which seat they own for the show.
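The exchange at the door can be sketched with a toy in-memory ledger in JavaScript. This is only a stand-in for an NFT network: the `TicketLedger` class, its method names, and the key strings are hypothetical, and nothing here corresponds to a real blockchain API.

```javascript
// A toy in-memory ledger standing in for an NFT network (hypothetical API).
class TicketLedger {
  constructor(venue) {
    this.venue = venue;
    this.owners = new Map(); // token id -> current owner's public key
  }

  // The venue issues one Entrance Ticket per seat and sells it to a buyer.
  issueEntranceTicket(seat, buyer) {
    this.owners.set(`entrance:${seat}`, buyer);
  }

  // A transfer is only valid if it comes from the current owner.
  transfer(tokenId, from, to) {
    if (this.owners.get(tokenId) !== from) {
      throw new Error(`${from} does not own ${tokenId}`);
    }
    this.owners.set(tokenId, to);
  }

  // At the door: the Entrance Ticket goes back to the venue and a Seat Ticket
  // for the same seat is issued to the holder.
  admit(seat, holder) {
    this.transfer(`entrance:${seat}`, holder, this.venue);
    this.owners.set(`seat:${seat}`, holder);
  }

  ownsSeat(seat, holder) {
    return this.owners.get(`seat:${seat}`) === holder;
  }
}

const ledger = new TicketLedger('venue-key');
ledger.issueEntranceTicket('A12', 'alice-key');
ledger.transfer('entrance:A12', 'alice-key', 'bob-key'); // secondary sale
ledger.admit('A12', 'bob-key');

// A second person holding a copy of the same private key tries to enter.
let secondEntryRejected = false;
try {
  ledger.admit('A12', 'bob-key');
} catch (err) {
  secondEntryRejected = true;
}
console.log(ledger.ownsSeat('A12', 'bob-key'), secondEntryRejected);
```

The second attempt fails because the Entrance Ticket now belongs to the venue, while the Seat Ticket still shows who owns the seat.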

A note about implementation:

At the moment, transaction costs on the popular networks for trading NFTs would make secondary sales and my proposed Entrance-Ticket-for-Seat-Ticket transactions cost-prohibitive, so there's work to be done. There are nascent NFT projects on the Stellar and Ripple networks, where transaction settlement time and costs are low. It may be worth exploring funding options from the Stellar Enterprise Fund or Ripple's Creator Fund.

Fri, 07 Jul 2017

Cast Iron Studio 7 on Linux

There's a shell script on the IBM DeveloperWorks forum describing how to run Cast Iron Studio on Linux, but it's from 2010. This describes the steps I followed to get Cast Iron Studio 7.5 running on Linux.

Download the latest version of Cast Iron Studio, 7.5.1.0 as of 2017-07-07: http://www-01.ibm.com/support/docview.wss?uid=swg24042011

The installation file is distributed as a Windows executable self-extracting zip file. The paths within the zip file contain backslashes rather than path-independent directory delimiters so the zip file needs to be fixed up before extracting it.

Use Info-ZIP's zip to strip the self-extracting stub from the zip file and to fix the structure:

$ zip -F -J 7.5.1.0-WS-WCI-20160324-1342_H11_64.studio.exe --out castiron.zip

Then use 7z's rename function to convert the backslashes to slashes within the archive.

$ 7z rn castiron.zip $(7z l castiron.zip | grep '\\' | awk '{ print $6, gensub(/\\/, "/", "g", $6); }' | paste -s)

Now you can unzip castiron.zip.

Finally, here's the modified version of the shell script I found above used to start Cast Iron Studio. Put it in the directory where you unzipped castiron.zip.

#!/bin/bash

# Tell Java that the window manager is LG3D to avoid grey window
/usr/bin/wmname LG3D

# Run from the directory containing this script
THIS=$(readlink -f "$0")
DIST_HOME=$(dirname "$THIS")
cd "$DIST_HOME"

# Build the boot class path from the resources directory and every plugin jar
LIB_PATH=""
LIB_PATH=$LIB_PATH:resources
for f in `find plugins -type f -name '*.jar'`; do
  LIB_PATH=$LIB_PATH:$f
done

export OSGI_FRAMEWORK_JAR=org.eclipse.osgi_3.10.1.v20140909-1633.jar
export MAIN_CLASS=org.eclipse.core.runtime.adaptor.EclipseStarter
export IH_LOGGING_PROPS="resources/logging.properties"
export ENDORSED="-Djava.endorsed.dirs=endorsed-lib"
export KEYSTORE="-Djavax.net.ssl.keyStore=security/certs"
export TRUSTSTORE="-Djavax.net.ssl.trustStore=security/cacerts"
export LOGIN_CTX="-Djava.security.auth.login.config=security/httpkerb.conf"
export JAAS_CONFIG="-Djava.security.auth.login.config=security/ci_jaas.config"
export JVM_DIRECTIVES="-Dapplication.mode.studio=true -Djava.util.logging.config.file=$IH_LOGGING_PROPS -Dcom.sun.management.jmxremote -Djgoodies.fontSizeHints=SMALL -Djavax.xml.ws.spi.Provider=com.approuter.module.jws.ProviderImpl -Dinstall4j.launcherId=10 -Dinstall4j.swt=false"
export JAVA_HOME=/usr/lib/jvm/ibm-java-x86_64-80

"$JAVA_HOME/bin/java" -client $ENDORSED $KEYSTORE $TRUSTSTORE $LOGIN_CTX $JAAS_CONFIG $YP_AGENT $PROFILINGAGENT $JVM_DIRECTIVES \
  -Dcom.approuter.maestro.opera.sessionFactory=com.approuter.maestro.opera.ram.RamSessionFactory \
  -Dcom.sun.management.jmxremote -Djgoodies.fontSizeHints=SMALL \
  -Xmx2048M -Xms512M -Xbootclasspath/p:$LIB_PATH -jar $OSGI_FRAMEWORK_JAR

Update JAVA_HOME to the path to your JVM. Performance seems to be better with the IBM JVM than the Oracle JVM.

Thu, 24 Sep 2015

How To Send Mail Safely Using PHP

There are a growing number of spammers exploiting PHP scripts to send spam. Such scripts are often simple "Contact Us" forms which use PHP's mail() function. When using the mail() function, it is important to validate any input coming from the user before passing it to the mail() function.

For example, consider the following simple script.

<?php
$to = 'info@example.com';
$subject = 'Contact Us Submission';
$sender = $_POST['sender'];
$message = $_POST['message'];
$mailMessage = "The following message was received from $sender.\n\n$message";
mail($to, $subject, $mailMessage, "From: $sender");
?>

Such a script looks fairly innocuous. The problem is that the sender variable sent from the client is not sanitized. By manipulating the value sent in the sender variable, a malicious spammer could cause this script to send messages to anyone.

Here's an example of how such an attack might be carried out.

curl -d sender="spammer@example.com%0D%0ABcc: victim@example.com" \
     -d message="Get a mortgage!" http://www.example.com/contact.php

Now, in addition to being sent to info@example.com, the message will also be sent to victim@example.com.

The solution to this problem is to either not set extra headers when using mail(), or to sanitize all data being sent in these headers. A simple example would be to strip out all whitespace from the sender's address.

$sender = preg_replace('~\s~', '', $_POST['sender']);

A more sophisticated approach might be to use PEAR's Mail_RFC822::parseAddressList() to validate the address.
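To see why the injected value adds a recipient and why stripping whitespace defuses it, here is a small sketch of the same string handling. It is written in JavaScript rather than PHP purely for illustration. It only mimics how the header string is assembled and does not send any mail, and the variable names are made up for this example.

```javascript
// The attacker's form value after the web server URL-decodes it:
// %0D%0A becomes a carriage return and line feed.
const submitted = decodeURIComponent('spammer@example.com%0D%0ABcc: victim@example.com');

// Interpolating it into the header string yields two header lines.
const unsafeHeaders = `From: ${submitted}`.split('\r\n');

// Stripping all whitespace (including CR and LF) collapses it back into one line.
const sanitized = submitted.replace(/\s/g, '');
const safeHeaders = `From: ${sanitized}`.split('\r\n');

console.log(unsafeHeaders);
console.log(safeHeaders);
```

The sanitized value is no longer a valid address, but it can no longer introduce a header of its own, which is the property that matters here.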


Debugging Salesforce Apps with Flame Graphs

Salesforce applications can sometimes be difficult to debug. The debug log often has the information you need, but finding it isn't always easy.

For example, in a recent project, I needed to figure out why performing an action from a Visualforce page was causing more SOQL queries than expected, generating warning emails as we neared the governor limit. This was a large application that had been under development for about eight years; many different developers had been involved in various phases of its development.

As with many Salesforce applications that have been through many phases of development, it wasn't obvious how multiple triggers, workflow rules, and Process Builder flows were interrelated.

It was easy enough to figure out which SOQL queries were running multiple times by grepping the debug log, but it wasn't obvious why the method containing the query was being called repeatedly.

In researching ways to analyze call paths, I found Brendan Gregg's work on flame graphs, which visualize call stacks as stacked bar charts. (You might also be familiar with Chrome's flame charts.)

Visualizing the call path through stacked bar charts turned out to be a good way to make sense of the Salesforce debug log.
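As a rough illustration of the idea, the sketch below folds a sequence of entry and exit events into the "stack path, then time" lines that flame graph tools typically consume. The event records are a simplified, hypothetical stand-in: this is not the actual Salesforce debug log format, and it is not a description of how Apex Flame is implemented.

```javascript
// events: simplified, hypothetical records of the form { type, name, nanos },
// where type is 'ENTRY' or 'EXIT'.
function foldStacks(events) {
  const stack = [];
  const totals = new Map(); // "a;b;c" -> time spent with exactly that stack active
  let last = null;
  for (const ev of events) {
    // Attribute the time since the previous event to the stack that was active.
    if (stack.length > 0 && last !== null) {
      const key = stack.join(';');
      totals.set(key, (totals.get(key) || 0) + (ev.nanos - last));
    }
    if (ev.type === 'ENTRY') {
      stack.push(ev.name);
    } else {
      stack.pop();
    }
    last = ev.nanos;
  }
  return [...totals].map(([path, nanos]) => `${path} ${nanos}`);
}

const folded = foldStacks([
  { type: 'ENTRY', name: 'trigger', nanos: 0 },
  { type: 'ENTRY', name: 'query', nanos: 10 },
  { type: 'EXIT', name: 'query', nanos: 40 },
  { type: 'EXIT', name: 'trigger', nanos: 50 },
]);
console.log(folded);
```

Each output line is one bar in the chart: the path gives its position in the stack and the number gives its width.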

So I created Apex Flame to visualize Salesforce debug logs using flame charts or flame graphs.

Here's an example based on the debug log generated from running one of the tests included in the Declarative Lookup Rollup Summaries tool.

You can drill in by clicking on a statement, or click on Search to highlight statements that match a string.

I'd love to get some feedback from other Salesforce developers. Try out Apex Flame, and let me know what you think.

As for the debugging issue that initially inspired the creation of Apex Flame, it turned out to be the combination of a trigger that wasn't checking whether the old and new values of a field were different, causing it to run code unnecessarily, combined with a workflow rule that was causing the trigger to be called multiple times.

Another recent case in which it turned out to be helpful was in troubleshooting a Process Builder flow that was failing to update child records as expected. The flow assumed that if the parent record is new (ISNEW()), it didn't have child records. It turns out this isn't necessarily true because a trigger on the parent object can create child records, and the flow runs after the after insert trigger. The order of execution is documented, but visualizing the execution order made it clear what was causing the unexpected behavior.


Tue, 11 Nov 2014

Net Neutrality

Thinking past hop 1

I had a great idea a few days ago, to start a website streaming cat videos called Petflix. I rented a server for $10/month that gave me 1Mbps of outbound bandwidth.

Growth has been exponential. I now have 20 customers that pay me $1/month for streaming cat videos. Unfortunately, during peak cat video viewing hours, I've started getting complaints that the video streams are choppy.

Each stream uses 100kbps. So when I get more than 10 concurrent viewers, I max out my bandwidth, and packets start getting dropped.

I could buy another 1Mbps of bandwidth for $10/month, but that would make my website unprofitable. So I came up with a brilliant idea that would keep this operation going.
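The arithmetic behind the parable, using only the figures given above, works out as follows. This JavaScript sketch assumes 1Mbps is 1000kbps, and the variable names are just labels for the numbers in the story.

```javascript
// Capacity: how many 100kbps streams fit in 1Mbps (taken as 1000kbps)?
const linkKbps = 1000;
const streamKbps = 100;
const maxConcurrentStreams = Math.floor(linkKbps / streamKbps);

// Monthly economics in dollars.
const customers = 20;
const revenue = customers * 1;      // $1/month each
const serverCost = 10;              // server with 1Mbps outbound
const extraBandwidthCost = 10;      // another 1Mbps

const profitNow = revenue - serverCost;
const profitWithUpgrade = revenue - serverCost - extraBandwidthCost;

console.log({ maxConcurrentStreams, profitNow, profitWithUpgrade });
```

Doubling the bandwidth doubles the capacity but consumes the entire margin.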

I have an old computer in my basement that I'm not using. I called up Comcast and offered to send them my computer, preloaded with all of my cat videos. They could install it in their data center, and I would configure my DNS servers to send all of my traffic originating from Comcast customers to the computer in their data center. Since 90% of my customers are on the Comcast network, I can grow my website without incurring any additional bandwidth costs.

Obama on net neutrality

The recent statement by Barack Obama on net neutrality suffers from the same error that most proponents make in arguing for net neutrality. It assumes away the problem of how the internet actually works.

Packets don't magically appear on an ISP's network, ready for the ISP to either send on to a customer, or throttle or block for some nefarious reason.

The Internet is a big interconnected network with lots of different agreements for connecting two networks together. As the owner of Petflix, I pay Tektonic for connecting my virtual server (which I also pay them for) with their network. Tektonic pays to connect their network with TierPoint (and for power and space in a data center). TierPoint pays to connect their network with Level 3. Level 3 and Comcast connect their networks, but the terms are contentious. And as a customer of an ISP, I pay for connecting my home network with Comcast.

Traditionally, large networks like Comcast and Level 3 would form settlement-free peering agreements. Both parties get about equal value out of connecting their networks, and it was deemed mutually beneficial to connect their networks without one party paying the other. Netflix started sending lots of traffic over the connection between Level 3 and Comcast, and Comcast no longer thinks they are getting equal value from peering, and wants to renegotiate so Level 3 pays them.

To be fair, Obama sort of recognizes that the problem isn't just between the ISP and their customers. One of his bullet points argues that net neutrality should apply between an ISP and other networks.

- Increased transparency. The connection between consumers and ISPs - the so-called "last mile" - is not the only place some sites might get special treatment. So, I am also asking the FCC to make full use of the transparency authorities the court recently upheld, and if necessary to apply net neutrality rules to points of interconnection between the ISP and the rest of the Internet.

But if we apply the other rules of No Blocking, No Throttling, and No Paid Prioritization to the connections between ISPs and other networks, it's unclear what kind of arrangements would be legal. Would the government have to mandate the amount of bandwidth at these interconnects?

What We Talk About When We Talk About Bandwidth

Getting back to my parable, when a customer of Comcast pays for 20Mbps of bandwidth, and they can't even get 100kbps of throughput to Petflix, whose fault is it?

Is it Comcast's responsibility to ensure that there is enough bandwidth through Level 3, TierPoint, Tektonic, and Petflix?

Is it Petflix's responsibility?

Fortunately, we have a pretty good way of sorting it out: markets. If Petflix is so important to Comcast customers that Comcast will lose customers to competitors by not providing a satisfying cat video viewing experience, Comcast will likely work to ensure sufficient bandwidth is available, unless the cost to do so makes those customers unprofitable.

If Petflix customers start cancelling their subscriptions because they can't enjoy my videos, I will probably pay to ensure sufficient bandwidth is available, unless doing so makes the operation no longer profitable.

Net Competition

I'm sure readers will object that Comcast is nearly a monopoly in most markets, and therefore markets won't solve the problem. And I agree. So instead of championing net neutrality, I encourage readers to champion net competition.

Instead of adding federal regulation on top of local regulation which grants ISPs like Comcast monopolies, deregulate the market in ISPs. In Portland, there could be three high-speed ISPs competing for consumers' business soon.

Nobody knows what amount of bandwidth should exist between the millions of connections between networks, and who should pay how much to whom for these connections. Only the market can sort it out.

Obamacare for the Internet?

Senator Ted Cruz referred to net neutrality as Obamacare for the internet. Is that a valid analogy?

"Net Neutrality" is Obamacare for the Internet; the Internet should not operate at the speed of government.

— Senator Ted Cruz (@SenTedCruz) November 10, 2014

I would consider these the primary characteristics of Obamacare:

- Additional regulation of heavily regulated industry

- New tax on uninsured taxpayers

- Subsidies for low income residents

- New government-run market for insurance

Obamacare doesn't seem like a great analogy for net neutrality. Both do add federal regulation on top of local/state regulation. And it's not inconceivable that, if the FCC were to start regulating ISPs in the way that Obama suggests, the problems caused would lead to suggestions for more government "solutions" like government-run markets for network connectivity, but that's a bit of a stretch.

With a little research, Cruz probably could have come up with an act of Congress that would make a better analogy than the ACA.

Fri, 31 Oct 2014

Promises are Useful

Henrik Joreteg tweeted that he doesn't like promises in javascript.

I’m just gonna say it:

I really don’t like promises in JS.

It’s a clever pattern, but I just don’t see the upside, sorry.

— Henrik Joreteg (@HenrikJoreteg) October 26, 2014

I've been working on a large javascript application for the past two years, and I've found promises to be invaluable in expressing the dependencies between functions.

But Henrik's a smart guy, having authored Human Javascript and written lots of Ampersand.js, so I decided to look at a recent case where I found the use of promises helpful, and try to come up with the best alternative solution.

The Backstory

I recently discovered a bug in our app initialization. It's an offline-first mobile app. We have a function that looks like this:

App.init = function() {
  return Session.initialize()
    .then(App.initializeModelsAndControllers)
    .then(App.manageAppCache)
    .then(App.showHomeScreen)
    .then(App.scheduleSync)
    .then(_.noop, handleError);
};

When the app starts up, it needs to:

- Load some user info from the local datastore, if possible; otherwise, from the network

- Load metadata about the models used in the app and initialize controllers

- Set up polling for application cache updates and event handlers for appcache events, to prompt the user to restart when a new version of the app is available, for example

- Show the initial application screen

- Schedule a sync of metadata and data

- and if an error occurs during any of these steps, handle it gracefully

The chaining of promise-returning functions in App.init nicely expresses the dependencies between these steps.

We need valid session info for the user before we can initialize the models, which may require fetching data over the network.

We can't show the initial home screen until the controllers have been initialized to bind to DOM events and display information from the models.

If there's a new version of the app available, we want to prompt the user to restart before they start using the app, and we don't want to schedule a sync until the app is being used.
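To show the ordering and error-handling behavior this relies on, here is a minimal, self-contained sketch. The steps are hypothetical stand-ins that just record their names; `makeStep` and `runInOrder` are names invented for this example and are not part of our app.

```javascript
// Hypothetical stand-in for an initialization step: it finishes asynchronously,
// records its name in `log`, and optionally fails.
function makeStep(log, name, shouldFail = false) {
  return () =>
    new Promise((resolve, reject) => {
      setTimeout(() => {
        log.push(name);
        if (shouldFail) {
          reject(new Error(`${name} failed`));
        } else {
          resolve();
        }
      }, 0);
    });
}

// Run the steps strictly in order. A rejection skips the remaining steps
// and lands in the single error handler at the end of the chain.
function runInOrder(steps, handleError) {
  return steps
    .reduce((chain, step) => chain.then(step), Promise.resolve())
    .then(() => undefined, handleError);
}

const log = [];
runInOrder(
  [
    makeStep(log, 'session'),
    makeStep(log, 'models', true), // simulate a failure partway through
    makeStep(log, 'homeScreen'),
  ],
  (err) => log.push(`handled: ${err.message}`)
).then(() => console.log(log));
```

Each step starts only after the previous one resolves, and the step after the failure never runs.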

Unfortunately, during a recent upgrade some users ran into a bug in some code I wrote to upgrade the local database, called from App.initializeModelsAndControllers. The result was an uncaught javascript error, Uncaught RangeError: Maximum call stack size exceeded, on the initialization screen which is displayed before App.init is called.

I fixed the bug which broke upgrading some databases, but I also realized that the dependency between App.initializeModelsAndControllers and App.manageAppCache should be reversed. If there's an upgrade available, we want users to be able to restart into it before initializing the app further (and running more code with a larger surface area for possible bugs).

The diff to make this change with our promise-returning functions is nice and simple:

--- a/app.js

+++ b/app.js

@@ -1,7 +1,7 @@

App.init = function() {

return Session.initialize()

- .then(App.initializeModelsAndControllers)

.then(App.manageAppCache)

+ .then(App.initializeModelsAndControllers)

.then(App.showHomeScreen)

.then(App.scheduleSync)

.then(_.noop, handleError);

(The careful reader will notice that stuck users need a way of upgrading the broken app stored in the app cache. The cache manifest gets fetched automatically to check for and trigger an app update each time the page is loaded, so even though we haven't set up an event handler to prompt the user to restart after the update has been downloaded, if the user waits a few minutes to ensure that the app update is complete, then kills and restarts the app, the new version will be loaded.

The careful reader who happens to be a coworker or affected customer will note that this didn't actually work because our cache manifest requires a valid session and our app starts up without refreshing the OAuth session to speed up start-up time. An upcoming release of the GreatVines app to the iOS app store will contain a new setting to trigger the session refresh at app initialization. Affected customers have been given a work-around that requires tethering the device to a computer via USB. Developers using the Salesforce Mobile SDK are welcome to email me for more info.)

Recapping the Question

App.init = function() {

return Session.initialize()

.then(App.initializeModelsAndControllers)

.then(App.manageAppCache)

.then(App.showHomeScreen)

.then(App.scheduleSync)

.then(_.noop, handleError);

};

Now, back to the issue at hand. What would this function look like using an alternative to promises? Ignoring the possible use of a preprocessor that changes the semantics of javascript, the two likely candidates are callback passing and event emitter models.

The typical callback hell approach looks something like this:

App.init = function(success, error) {

Session.initialize(function() {

App.manageAppCache(function() {

App.initializeModelsAndControllers(function() {

App.showHomeScreen(function() {

App.scheduleSync(success, error);

}, error);

}, error);

}, error);

}, error);

};

For simplicity, I've ignored the fact that the original version both handles the error and returns it to the caller through a rejected promise, but I'm pretty sure this is not what Henrik had in mind. So what might an improved callback-passing approach look like?

App.init = function(success, error) {

var scheduleSync = App.scheduleSync.bind(App, success, error);

var showHomeScreen = App.showHomeScreen.bind(App, scheduleSync, error);

var initializeModelsAndControllers = App.initializeModelsAndControllers.bind(App, showHomeScreen, error);

var manageAppCache = App.manageAppCache.bind(App, initializeModelsAndControllers, error);

Session.initialize(manageAppCache, error);

};

By pre-binding the callbacks, we avoid the nested indentations, but we've reversed the order of the dependencies, so that's not a great improvement.

I've failed to come up with a good callback-passing alternative to promises, so let's look at what an event emitter approach would look like.

App.init = function() {

BackboneEvents.mixin(App);

App.once('sessionInitialized', App.manageAppCache);

App.once('appCacheManaged', App.initializeModelsAndControllers);

App.once('modelsAndControllersInitialized', App.showHomeScreen);

App.once('homeScreenShown', App.scheduleSync);

var initialized = App.trigger.bind(App, 'initialized');

App.once('syncScheduled', initialized);

App.once('sessionInitializationError appCacheManagementError modelsAndControllersInitializationError homeScreenError syncSchedulingError', function(error) {

App.off('sessionInitialized', App.manageAppCache);

App.off('appCacheManaged', App.initializeModelsAndControllers);

App.off('modelsAndControllersInitialized', App.showHomeScreen);

App.off('homeScreenShown', App.scheduleSync);

App.off('syncScheduled', initialized);

App.trigger('initializationError', error);

});

Session.initialize();

};

This approach does allow us to list dependencies from top to bottom, and doesn't take us into callback hell, but it has some problems as well.

With the promise-returning and callback-passing approaches, each function was loosely coupled to the caller, returning a promise or accepting success and error callbacks. With this event emitter approach, we need to make sure both the caller and the callee agree upon the names of events. Admittedly, most functions are going to be more tightly coupled than these, regardless of the approach, having to agree upon parameters to the called function and parameters to the resolved promise or callback, but they don't need to share any special information like the event name to determine when the called function is done.

This event emitter example also requires more work to clean up when an error occurs.

Perhaps instead of using a single event bus, we could listen for events on multiple objects and standardize on event names. Events could be triggered on each function.

App.init = function() {

var initialized = App.init.trigger.bind(App.init, 'initialized');

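// The parameter is named err so that it does not shadow the handler itself,
// which the off() calls below need to reference.
var error = function(err) {

App.init.trigger('error', err);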

Session.initialize.off('done', App.manageAppCache);

Session.initialize.off('error', error);

App.manageAppCache.off('done', App.initializeModelsAndControllers);

App.manageAppCache.off('error', error);

App.initializeModelsAndControllers.off('done', App.showHomeScreen);

App.initializeModelsAndControllers.off('error', error);

App.showHomeScreen.off('done', App.scheduleSync);

App.showHomeScreen.off('error', error);

App.scheduleSync.off('done', initialized);

App.scheduleSync.off('error', error);

};

// Assume every function mixes in BackboneEvents

Session.initialize.once('done', App.manageAppCache);

Session.initialize.once('error', error);

App.manageAppCache.once('done', App.initializeModelsAndControllers);

App.manageAppCache.once('error', error);

App.initializeModelsAndControllers.once('done', App.showHomeScreen);

App.initializeModelsAndControllers.once('error', error);

App.showHomeScreen.once('done', App.scheduleSync);

App.showHomeScreen.once('error', error);

App.scheduleSync.once('done', initialized);

App.scheduleSync.once('error', error);

Session.initialize();

};

This is very verbose, containing a lot of boilerplate. We could create a function to create these bindings, e.g.

var when = function(fn, next, error) {

BackboneEvents.mixin(fn);

fn.once('done', next);

fn.once('error', error);

};

when(Session.initialize, App.manageAppCache, error);

...

But it doesn't feel like we're on the right path. We'd still have to handle unbinding all of the error handlers when an error occurs in one of the functions.

It's also worth pointing out that using an event emitter isn't really appropriate in a case like this where you want to get an async response to a specific function call. If the called function can be called multiple times, you don't know that the event that was triggered was in response to your call without passing additional information back with the event, and then filtering.
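The correlation problem can be shown with a small sketch. The Emitter below is a hand-rolled stand-in for BackboneEvents with only once and trigger, and service.fetch is a hypothetical function that reports completion on a shared 'done' event; neither comes from the app.

```javascript
// Minimal hand-rolled emitter (a stand-in for BackboneEvents).
function Emitter() {
  this.handlers = {};
}
Emitter.prototype.once = function(name, fn) {
  (this.handlers[name] = this.handlers[name] || []).push(fn);
};
Emitter.prototype.trigger = function(name, payload) {
  var fns = this.handlers[name] || [];
  this.handlers[name] = []; // "once": handlers are removed after firing
  fns.forEach(function(fn) { fn(payload); });
};

// Hypothetical service: every call announces its result on the same event.
var service = new Emitter();
service.fetch = function(id) {
  this.trigger('done', { id: id });
};

var seen = { a: [], b: [] };
service.once('done', function(r) { seen.a.push(r.id); }); // caller A wants id 1
service.once('done', function(r) { seen.b.push(r.id); }); // caller B wants id 2
service.fetch(1);
service.fetch(2);
// Both listeners fired on the first 'done': caller B received the response
// meant for caller A and never sees the response for id 2.
```

Avoiding this would mean having each listener check the id carried by the event, which is the extra filtering described above.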

There's Got To Be A Better Way

Unsatisfied with any of the alternatives to promises I came up with, I replied to Henrik's tweet, and he said that he uses Async.js and Node's error-first convention. So let's look at what using async.series would look like.

App.init = function(callback) {

async.series([

Session.initialize,

App.manageAppCache,

App.initializeModelsAndControllers,

App.showHomeScreen,

App.scheduleSync,

], callback);

};

Finally, we've come to an alternative solution that feels equivalent to the promise one. The async.series solution has at least one benefit over the promise-chaining one: the Async library has a separate function, async.waterfall, to use when the return value from one function should be passed as a parameter to the next function in the series. In my original solution, it's not clear without looking at the function definitions whether the return value from each function is used by the next function.
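For readers unfamiliar with the error-first convention, the following hand-rolled sketch shows the general idea behind running tasks in series. It is not Async.js's implementation, and the helper name series and the sample tasks are made up for illustration.

```javascript
// Run error-first tasks one after another. Each task receives a callback
// and must call it as cb(err) on failure or cb(null, result) on success.
function series(tasks, callback) {
  var results = [];
  function run(i) {
    if (i === tasks.length) {
      return callback(null, results);
    }
    tasks[i](function(err, result) {
      if (err) {
        return callback(err, results); // stop at the first error
      }
      results.push(result);
      run(i + 1);
    });
  }
  run(0);
}

// Hypothetical tasks that record the order in which they run.
var order = [];
var ok = function(name) {
  return function(cb) { order.push(name); cb(null, name); };
};
var bad = function(cb) { order.push('bad'); cb(new Error('boom')); };

var outcome = {};
series([ok('first'), bad, ok('never')], function(err, results) {
  outcome.err = err;
  outcome.results = results;
});
```

As with the promise chain, the task after the failing one never runs, and a single callback receives the error.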

Given the simple error handling in App.init, in which an error anywhere in the chain aborts the flow, and a single error handler function is responsible for handling the error from any of the functions, Async.js would be a decent replacement.

But for a more complicated flow, a promise-based approach would probably come out on top. For example, here's what App.initializeModelsAndControllers looks like:

App.initializeModelsAndControllers = function() {

return Base.init()

.then(Base.sufficientlyLoadedLocally)

.then(App.handleUpgrades, App.remoteInit)

.then(App.loadControllers);

};

This function introduces a fork in the chain of serialized functions:

- Initialize the models

- Check whether the app is ready to start up offline

- If so, kick off any upgrade processing necessary

- If not, start up the remote initialization process

- Initialize the controllers
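The fork relies on passing both a fulfillment and a rejection handler to a single then call. Here is a minimal sketch of that behaviour with native promises; the steps are hypothetical stand-ins that only log their names, and the loadedLocally flag is an assumption used to simulate the check rejecting.

```javascript
// Resolves with the list of steps that ran, in order.
function initializeModelsAndControllers(loadedLocally) {
  var log = [];
  var step = function(name) {
    return function() { log.push(name); };
  };
  return Promise.resolve()
    .then(step('Base.init'))
    .then(function() {
      log.push('sufficientlyLoadedLocally');
      if (!loadedLocally) {
        throw new Error('not ready to start offline'); // rejects the chain
      }
    })
    // Fork: the first handler runs on fulfillment, the second on rejection.
    .then(step('handleUpgrades'), step('remoteInit'))
    // Either branch returns normally, so the chain rejoins here.
    .then(step('loadControllers'))
    .then(function() { return log; });
}
```

One consequence of this shape is that a rejection from the first step would also be routed to the second handler, so in this sketch a failing Base.init would trigger remoteInit as well.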

It looks like this could be done with async.auto, but it would probably start getting messy.

Tue, 30 Sep 2014

Changing the default browser in Debian

Note to self: the next time you want to change your default browser, here's how.

$ xdg-mime default chromium.desktop x-scheme-handler/http
$ xdg-mime default chromium.desktop x-scheme-handler/https
$ xdg-mime default chromium.desktop text/html
$ sudo update-alternatives --set x-www-browser /usr/bin/chromium

Tue, 09 Sep 2014

Building Multi-Container Apps with Panamax

CenturyLink released Panamax, a new open source tool for building and managing applications composed of multiple docker containers. It's similar to fig in providing a file format for describing the docker images which make up your application, and expressing the links between the containers.

The files used by Panamax to describe an application are called templates, and Panamax expands upon the model provided by fig by allowing applications to be built from existing templates, i.e. collections of existing images, and by providing a web interface for building templates. Templates can be fetched from and exported to any github repo.

You create a new Panamax application by using an existing template or starting with a single docker image from Docker Hub.

Building an app using a template

Below I provide an example of how to build an application using a Panamax template. I created a template for the Cube event-logging and analysis server. (There's also a copy of the template in the panamax contest repo, but it was built using a MongoDB image which has since been deleted from Docker Hub.)

We'll create a simple Hello World node.js app, which logs each request to the cube server.

Install Panamax

First, install Panamax as described in the documentation. The current version of Panamax is distributed as a Vagrant VM, running CoreOS. Panamax itself is three docker apps that run in the VM: an API server, a web app that provides the main interface, and cAdvisor, used to monitor the docker containers. Panamax also includes a shell script, panamax, which is used to start up the VM.

Add a Panamax Template Source

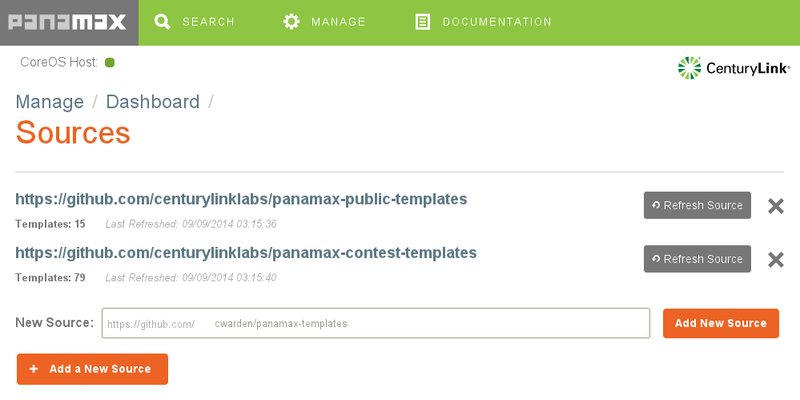

Panamax is distributed with two github repos containing templates. Navigate to Manage | Sources to add my cwarden/panamax-templates repo as a source of templates.

Searching for Panamax Templates and Docker Images

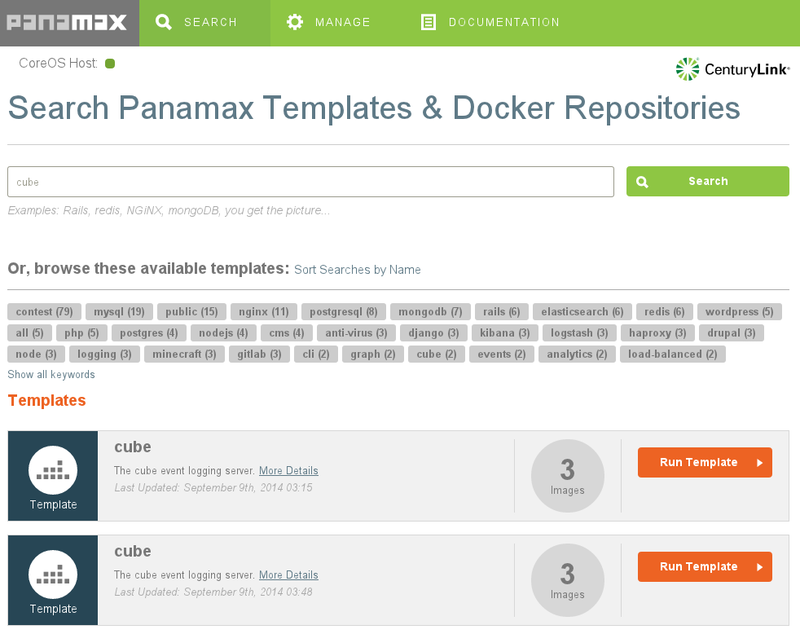

Now, on the search screen, if you search for "cube", you'll find my template. (The first one is from the contest repo.)

Below the templates, you'll also find individual docker images. If you wanted to build up your application starting from a single image, you could start with one of these.

Click on More Details, and you'll see that my template is made up of three docker images: a MongoDB database, the cube collector, which accepts events and stores them in the database, and the cube evaluator, which reads data out of MongoDB and computes aggregate metrics from the events.

The details modal window also shows documentation I wrote up when creating the template.

Creating an Application

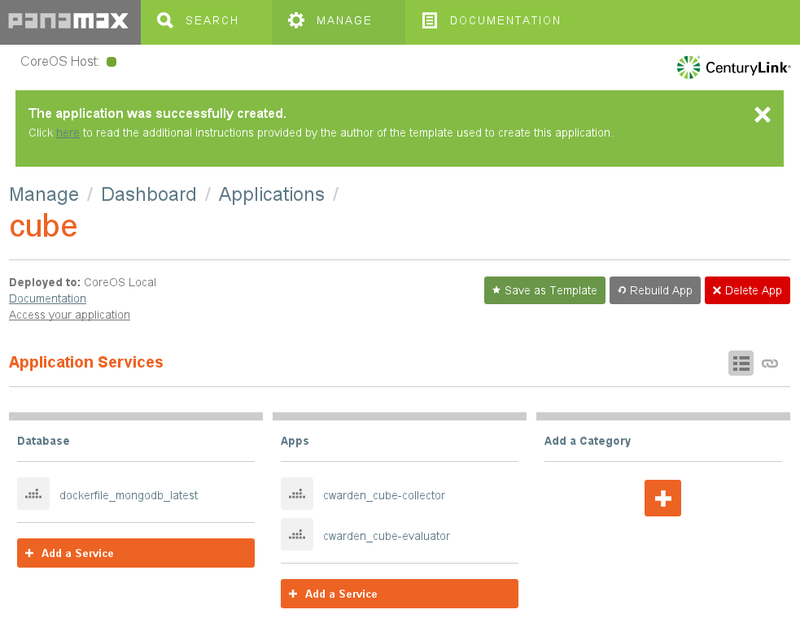

Click on Run Template. This will create a new application called "cube".

The Documentation link will show the same notes as on the More Details screen. The Port Forwarding section is important. Recall that Panamax and all of the docker containers it manages are running within a VM. If we want to access any of the services provided by these containers from outside the VM, we need to set up port forwarding.

In this case, we're going to add another docker container which sends data to the cube collector, but we'll want to access the cube evaluator from our host machine to make sure the logging is working correctly, so we need to set up port forwarding to the VM for the evaluator:

$ VBoxManage controlvm panamax-vm natpf1 evaluator,tcp,,1081,,1081

(Instructions for sending data to the collector from the host machine are also included in the documentation.)

Prepare An App To Use With The Template

We'll use Valera Tretyak's simple hello world app for our application, making a small change to log each request to the cube collector.
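A rough sketch of what such a change could look like follows. It is not the actual hello world app or the exact modification made to it: the function names, the "request" event type and the fields under data are illustrative choices, and the sketch assumes the collector accepts a JSON array of events via HTTP POST at /1.0/event/put.

```javascript
const http = require('http');

// Build a Cube event describing one incoming request. The event type and
// the fields under `data` are illustrative, not taken from the original app.
function buildEvent(req, now) {
  return {
    type: 'request',
    time: now.toISOString(),
    data: { method: req.method, path: req.url }
  };
}

// POST the event to the collector (assumed endpoint: /1.0/event/put).
// Errors are swallowed so that a logging failure never breaks the response.
function logToCube(event, host, port) {
  const body = JSON.stringify([event]);
  const post = http.request({
    host: host,
    port: port,
    method: 'POST',
    path: '/1.0/event/put',
    headers: {
      'Content-Type': 'application/json',
      'Content-Length': Buffer.byteLength(body)
    }
  });
  post.on('error', function() {});
  post.end(body);
}

// Create the hello world server; `log` is called with one event per request.
function createServer(log) {
  return http.createServer(function(req, res) {
    log(buildEvent(req, new Date()));
    res.writeHead(200, { 'Content-Type': 'text/plain' });
    res.end('Hello World\n');
  });
}
```

The server could then be started with something like createServer(function(e) { logToCube(e, 'collector', 1080); }).listen(8080), where the hostname 'collector' and port 1080 are assumptions about how the linked container is reachable.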

Then we can create a docker image for this app and upload it to the Docker Hub.

$ docker build -t cwarden/hello-cube .
$ docker push cwarden/hello-cube

Extending The Template To Create a New Application

Now, let's actually use the template as a template by adding our new image as

another container. From Manage | Manage Applications | cube, let's add the

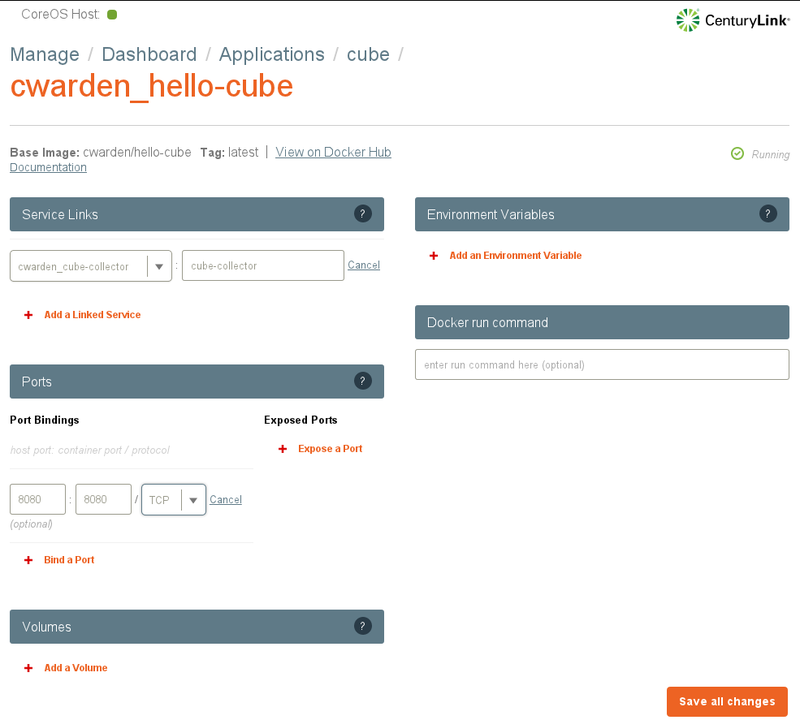

hello-cube service.

Next, we need to link the new container to the cube collector so it knows the

hostname and port to use when sending events. We also need to expose the

container's port to the VM. Click on the hourglass next to the hello-cube app.

And we need to expose the VM's port to the host machine.

$ VBoxManage controlvm panamax-vm natpf1 hello,tcp,,8080,,8080

Using The New App

When we access the app on localhost:8080, it will send an event to the cube collector. We can use the cube evaluator to monitor the number of events being generated.

Exporting An App As A Template

Now that we've finished building our app, we can export the app as a new template to a github repo using the Save as Template button.

A Convoluted Solution to Google Drive for Linux

I need to use Google Drive to share files with coworkers. I tried using grive, but it crashed regularly.

The solution I came up with uses VirtualBox, a Windows 7 image, Google Drive for Windows, a tool from Microsoft called SyncToy, and the Windows task scheduler.

- Install VirtualBox

- Install a Windows image using ievms

- Share a folder from your linux host to your windows guest, e.g. /home/user/GDrive

- Install Google Drive in Windows, and configure to sync to your hard drive image, e.g. C:\Users\IEUser\Google Drive, not the shared drive; Google Drive won't sync to a networked drive.

- Install SyncToy, and configure it to sync between your Google Drive location and your shared drive, e.g. \\VBOXSVR\GDrive. Use the network name rather than the drive letter assigned to the shared drive for scheduled syncing to work.

- Create a scheduled task to run as IEUser whether the user is logged in or not (you don't need to run with the highest privileges). Set the trigger to one time, and repeat every 5 minutes indefinitely. Set the action to run "C:\Program Files\SyncToy 2.1\SyncToyCmd.exe" with the -R argument.